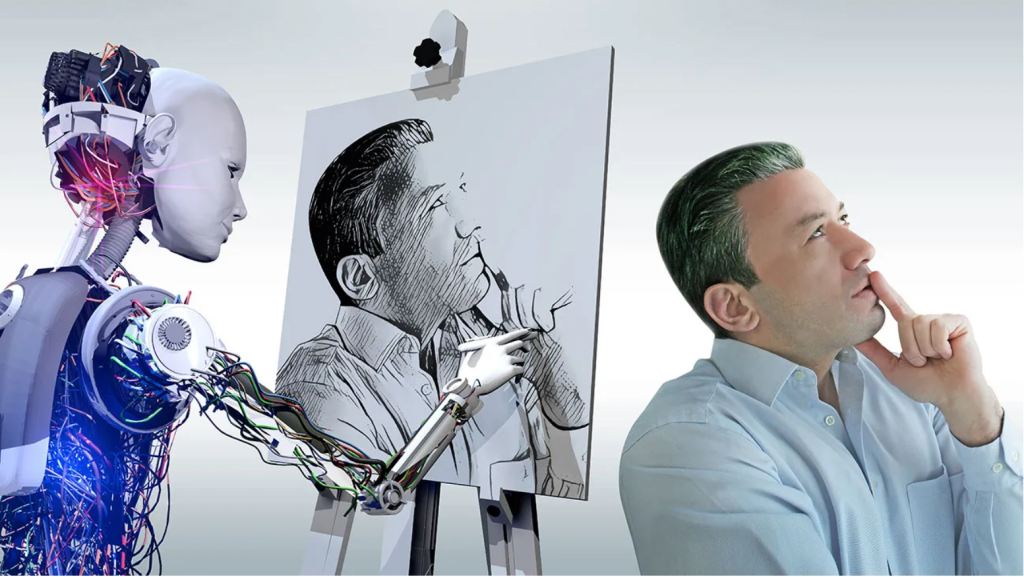

In today’s world, AI is everywhere, shaking hands with digital media and creative fields. From generating news stories to composing music and crafting art, AI’s fingerprints are all over the place. But just as we marvel at AI’s ingenuity and efficiency, we cannot ignore the legal and ethical dilemmas it brings, especially in the realm of copyright. Media industry creators are outraged to discover that AI serves not only as a muse, offering boundless inspiration, but also as a stealthy robber, pilfering their original works without leaving a trace.

Surprise on One Side, Anger on the Other

Generative AI is an artificial intelligence technique that can create various types of content, including text, images, audio, and synthetic data (Lawton, 2024). While the concept has roots in chatbots dating back to the 1960s, it wasn’t until 2014, with the extensive use of generative adversarial networks (GANs) and large language models (LLMs), that AI began to progressively identify patterns or symbols in data, producing text, photos, and videos of the highest quality in seconds (Janiesch et al., 2021).

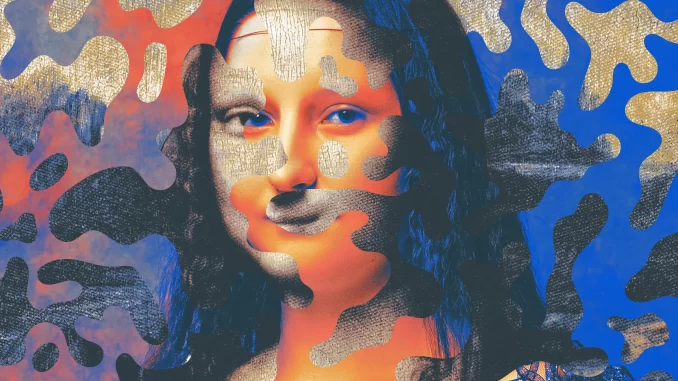

This is obviously exciting because people notice that the barriers to entry for artistic creation have seemingly been lowered. Stable Diffusion, a well-known image-generating AI, attracted more than 200,000 developers within two months of launch, with more than 1 million registered users from over 50 countries. Together, they created more than 170 million images (Wiggers, 2022). Imagine that even as an amateur, you can use Stable Diffusion to create a beautiful painting in the style of Monet in a short time. The change is nothing short of revolutionary.

The official website picture of Stable Diffusion

At the same time, for professional artists, AI-generated content (AIGC) also brings both surprises and conveniences. Anna Vaus, a musician, has created beautiful country music with poetic lyrics generated by AI (Vaus, 2022). “At first, it was exciting and surreal,” illustrator Kelly McKernan tweeted in December 2022.

She did not anticipate, though, that she would later join several angry artists to sue Stability AI and Midjourney, two companies specializing in image-generating AI (Dixit, 2023).

The problem clearly arises from the operational logic behind generative AI: an AI system cannot recognize anything without extensive computational training on large datasets or predefined rules (Crawford, 2021). Because the data that AI had gathered was originally generated by humans, when some creators discovered that their work had been stolen by AI without their knowledge, the initial surprise quickly turned to extreme anger.

Illustrator Hollie Mengert, for instance, found her painting style added to Stable Diffusion without permission (Baio, 2023). In October 2022, artists Daniel Danger and Tara McPherson, among others, revealed that their images were used without consent or compensation to train data models of image-generating AI (Metz, 2022b). Similarly, China’s UGC (user-generated content) platform LOFTER has faced backlash over its AI drawing function, as some of the images it generated were found to be very similar to works uploaded by artists on the platform (Zhao, 2023).

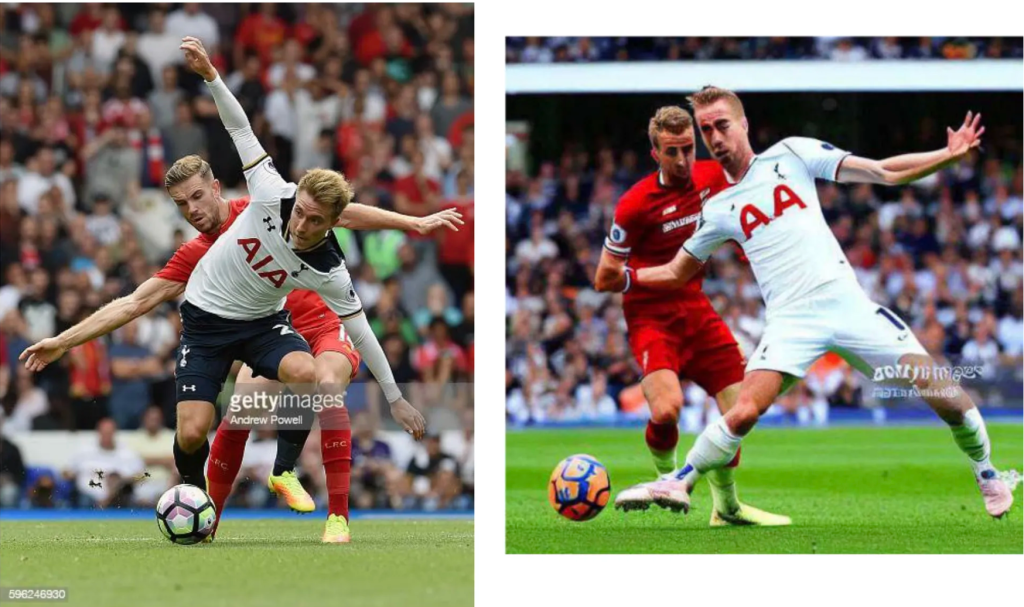

Artwork by Hollie Mengert (left) vs. images generated with Stable Diffusion DreamBooth in her style (right)

“This technology, generative AI, has effectively destroyed entire career paths for today’s most talented and passionate living artists, and this development has accelerated the scarcity of independent artists like me,” McKernan said.

Now, an increasing number of artists are opposing AI art. Image website ArtStation is littered with the same image repeatedly posted by different users: the word “AI” crossed out by a large red “No” sign, signaling a collective rejection of AI-generated images (Xiang, 2022).

“No AI Art” images posted by artists started to dominate the trending section of ArtStation following the platform’s refusal to ban AI-generated artwork

“I don’t want to participate at all in the machine that’s going to cheapen what I do,” Daniel Danger said.

Dilemma Source: The Challenge of Defining the Creative Process

However, when artists aim to safeguard their rights under copyright law, they hit a roadblock. Studies suggest that generative models typically do not store and reproduce a single full image; instead, they break training data down into elements and learn possible features and relationships among them (Sag, 2023). Furthermore, the large models used by AI businesses still lack complete transparency, resembling a “black box” (Pasquale, 2016). As a result, generative AI is more likely to imitate a painting style than to copy a specific work, which makes it hard for creators to show that an output meets the criterion of “plagiarism” and limits their ability to convincingly argue that their works have been stolen.

On the other hand, creators who use AI also feel quite aggrieved. AI creation is not a purely mechanical process: it requires users to provide detailed instructions and descriptions to generate corresponding works. This, to some extent, aligns with copyright law, which seeks to reward the unique and subjective contributions of human creators (Lucchi, 2023). In August 2022, an artwork created with Midjourney took first prize in an art competition in the US (Metz, 2022a).

Jason Allen’s A.I.-generated work, “Théâtre D’opéra Spatial,” took first place in the digital category at the Colorado State Fair

Although Jason M. Allen, the work’s author, underscored the complexity of the creative process, revealing that he generated 900 images and ultimately spent over 80 hours refining the artwork, the award is still highly controversial. “It’s like letting robots compete in the Olympics,” angry voices chimed in, questioning the extent to which Allen relied on external databases to fuel his creation.

Key Issue: AI Copyright Disputes Over “Input” and “Output”

The dilemma has thrust two key AI copyright issues into the spotlight:

Firstly, how do we protect the copyright of works utilized in AI training datasets (the angry “input”)? Secondly, can creations generated by artificial intelligence (the surprise “output”) be copyrighted?

It’s important to note that when an artist whose work has been stolen by AI also employs AI to create, these two issues will intertwine, creating a complex web of copyright disputes.

Answer from Both Sides

The Punch of the ‘Input’: Legal Proceedings and Preemptive Defense

Even if there is no overwhelming evidence that generative AI is brazenly stealing the content of works, an increasing number of “input parties”, particularly companies and platforms with extensive creative resources, are joining the legal battle to safeguard their copyrights.

In January 2023, image company Getty Images sued Stability AI for $1.8 trillion, alleging the unauthorized copying and processing of millions of copyrighted images and related data held by Getty Images, including company trademarks (Vincent, 2022).

An illustration from Getty Images’ lawsuit, showing an original photograph and a similar image (complete with Getty Images watermark) generated by Stable Diffusion

At the end of 2023, OpenAI and Microsoft were sued by the New York Times over their text-generating AI. The newspaper accused them of exploiting millions of its articles to train AI models that reproduce content word for word and mimic its writing style, thus damaging its relationships with readers and advertisers (Roth, 2023). In addition, OpenAI and Microsoft were sued in 2024 by individual non-fiction writers Nicholas Basbanes and Nicholas Gage for “stealing” content from their work without compensation (Mangan, 2024). On the music front, major publishers like Universal Music are taking aim at Anthropic, claiming the illegal use of copyrighted lyrics in training its chatbot, Claude (Brittain, 2024).

However, litigation is far from easy, given the constraints of time, money, and competing interests. Therefore, the “input party” is also exploring diverse defenses to protect their copyright before the work is published. In August 2023, The New York Times blocked GPTBot, OpenAI’s crawler launched earlier that month, preventing it from using the publication’s content for AI model training (Peters & Davis, 2023). Meanwhile, Ben Zhao, a computer science professor at the University of Chicago, has developed a new tool called Glaze that aims to prevent AI models from learning a particular artist’s style. By uploading their work to Glaze and selecting a different art style, artists can render their creations unrecognizable to AI models, making them unusable as training data (Glover, 2024).
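The crawler block described above is typically expressed in a site's `robots.txt` file. As a minimal sketch, the Python snippet below uses the standard library's `urllib.robotparser` to check whether a given crawler is disallowed. The rules in `EXAMPLE_ROBOTS_TXT`, the helper name `is_blocked`, and the `example.com` URL are illustrative assumptions, not the actual configuration of The New York Times or any other publisher.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules (an assumption for illustration only):
# disallow the GPTBot user agent everywhere, allow every other crawler.
EXAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def is_blocked(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the robots.txt rules disallow user_agent from fetching url."""
    parser = RobotFileParser()
    # parse() takes the file content as a list of lines.
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    page = "https://example.com/some-article"  # hypothetical URL
    print("GPTBot blocked:", is_blocked(EXAMPLE_ROBOTS_TXT, "GPTBot", page))
    print("Other crawler blocked:", is_blocked(EXAMPLE_ROBOTS_TXT, "SomeOtherBot", page))
```

Note that `robots.txt` is a voluntary convention: it only works if the crawler chooses to honor it, which is one reason creators also turn to tools such as Glaze.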

The Striving of the ‘Output’: Leveraging Copyright Law Innovation

For creators who use AI to produce their work, the hope lies in leveraging copyright law innovation to defend their creations. They argue that today’s AI works are in the same position as photographs a hundred years ago: early in the history of the camera, photographs were regarded as the work of machines rather than creative expression.

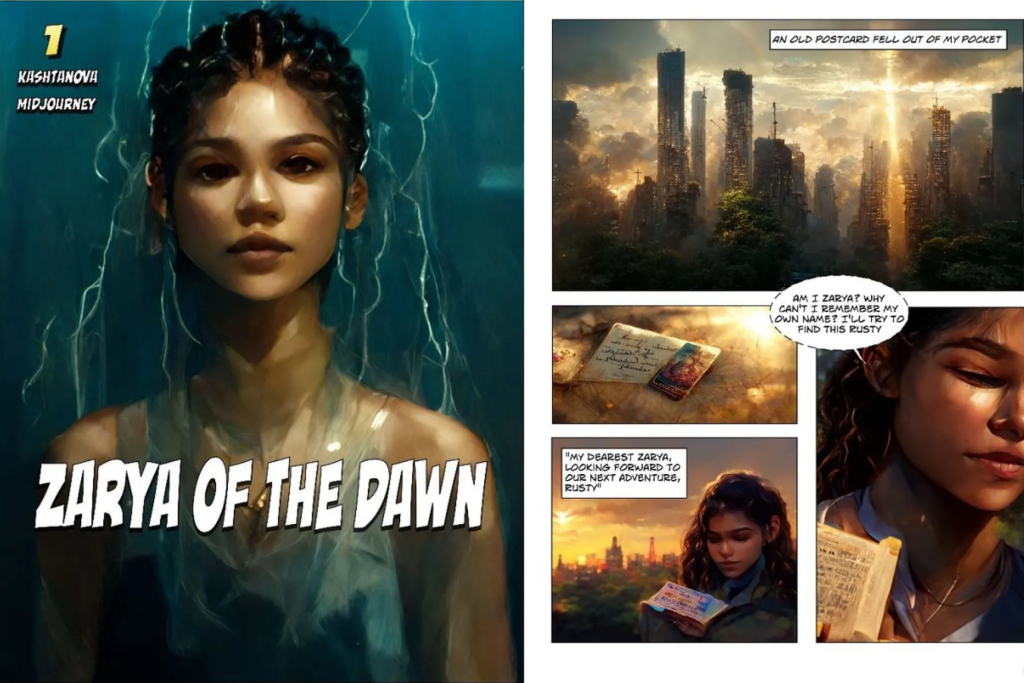

One of the most typical examples is the first artificial intelligence art copyright registration in the United States. Artist Kristina Kashtanova utilized text-to-image AI Midjourney to create a full-length 18-page comic book, Zarya of the Dawn, which received registration approval from the U.S. Copyright Office in September 2022 (Edwards, 2022).

The cover page and the second page of Zarya of the Dawn

Although the copyright registration was partially canceled five months later, leaving only the written story and the arrangement of the images protected, it was still “good news” for Kashtanova, as it pointed to a new direction for people in the AI art world (Brittain, 2023a).

In March 2023, the U.S. Copyright Office issued new guidance on this basis, emphasizing that the term “author” does not apply to non-humans, including machines, and that if a human simply enters a prompt and the machine produces a complex written, visual, or musical work in response, then the “traditional elements of authorship” are being performed by a non-human AI. On this view, purely AI-generated art is not protected by copyright, a position that a US federal court confirmed in August 2023 (Brittain, 2023b).

However, interpretations vary across countries.

The UK is one of the few countries that provides copyright protection for computer-generated works, and it has kept that protection in place amid the explosion of generative AI in 2022 (UK Government, 2022). In 2024, the UK government published a further response stating that using copyrighted works as AI training data constitutes copyright infringement unless licensed or exempted (Dennis, 2024).

In Europe, the European Parliament approved the Artificial Intelligence Act (AI Act) in 2024. The regulation respects existing EU copyright law and applies to the entire AI cycle from the input level to the output level. This means that copyrighted works on the input side cannot be identified at the output level without a copyright exemption or owner consent (Guadamuz, 2024).

It is also worth noting that in December 2023, a Chinese court ruled for the first time that AI-generated images are protected by copyright. The plaintiff had sued an online blogger for using, without permission, an image he had created with Stable Diffusion. Experts said the ruling is expected to mark a substantial policy endorsement of the AI industry by China (Shen, 2024).

The Future: Transnational Law and New Definitions

The varying court rulings on AI copyright across different countries demonstrate that governments have differing perspectives on the advancement of the AI industry. However, AI is a global issue, and the realm of generative AI knows no borders. Looking ahead, we may witness an increase in transnational legal rulings on AI and the emergence of linkages between international laws. To achieve better regulation, national legal bodies need to establish more detailed and precise definitions of AI copyright in the context of diverse litigation.

The tech giants behind generative AI are not just wading through complaints from the “input party”, but also looking for ways to smooth things over, such as implementing compensation plans. OpenAI CEO Sam Altman has promised that if artworks on the image platform Shutterstock are used in AI models, the authors of those works will be paid accordingly. Bria, a generative AI firm, pays royalties based on output, which means that content producers receive a fixed price every time their copyrighted work is used by an AI system. “If someone creates a particular piece of art in the style of the artist, then the artist will have the right to decide how much money he wants to make on that synthetic creation. Then we will split the revenue,” explains Yair Adato, the company’s co-founder and CEO (Glover, 2024).

Criticism of AI has never gone away, with critic Jonathan Taplin stating that “the rise of digital giants is directly related to the decline of creative industries” (Taplin, 2017, p. 6). But the generation of new things is a sign of growing artificial intelligence, and its impact on the creative industry is an inevitable result. Muses and robbers are two sides of the same coin when it comes to AI. The crucial question for us is how to learn to use AI to reshape our world and balance the interests of all parties. This might call for a new definition of AI, new actions, and, of course, the courage and determination to make changes. So, what are you going to do?

References:

Baio, A. (2023, November 15). Invasive diffusion: How One unwilling illustrator found herself turned into an AI model. Waxy.org. https://waxy.org/2022/11/invasive-diffusion-how-one-unwilling-illustrator-found-herself-turned-into-an-ai-model/

Brittain, B. (2023a, February 23). AI-created images lose U.S. copyrights in test for New Technology. Reuters. https://www.reuters.com/legal/ai-created-images-lose-us-copyrights-test-new-technology-2023-02-22/

Brittain, B. (2023b, August 22). AI-generated art cannot receive copyrights, US Court says. Reuters. https://www.reuters.com/legal/ai-generated-art-cannot-receive-copyrights-us-court-says-2023-08-21/

Brittain, B. (2024, February 16). Music publishers fire back at anthropic in AI copyright lawsuit. Reuters. https://www.reuters.com/legal/litigation/music-publishers-fire-back-anthropic-ai-copyright-lawsuit-2024-02-15/

Crawford, K. (2021). Atlas of AI: Power, Politics, and the Planetary Costs of Artificial Intelligence. New Haven: Yale University Press. https://doi.org/10.12987/9780300252392

Dennis, G. (2024, January 19). UK government AI report confirms decision on Protection of Copyright Works. Pinsent Masons. https://www.pinsentmasons.com/out-law/news/ai-report-confirms-decision-on-protection-of-copyright-works

Dixit, P. (2023, January 20). “It’s gross to me”: The trio of artists suing AI Art Generators Speaks Out. BuzzFeed News. https://www.buzzfeednews.com/article/pranavdixit/ai-art-generators-lawsuit-stable-diffusion-midjourney

Edwards, B. (2022, September 22). Artist receives first known US copyright registration for Latent Diffusion AI art. Ars Technica. https://arstechnica.com/information-technology/2022/09/artist-receives-first-known-us-copyright-registration-for-generative-ai-art/

Glover, E. (2024, February 28). AI-generated content and copyright law: What we know. Built In. https://builtin.com/artificial-intelligence/ai-copyright

Guadamuz, A. (2024, March 19). The EU AI Act and copyright. TechnoLlama. https://www.technollama.co.uk/the-eu-ai-act-and-copyright

Janiesch, C., Zschech, P., & Heinrich, K. (2021). Machine learning and deep learning. Electronic Markets, 31(3), 685–695. https://doi.org/10.1007/s12525-021-00475-2

Lawton, G. (2024, January 18). What is Generative AI? Everything you need to know. Enterprise AI. https://www.techtarget.com/searchenterpriseai/definition/generative-AI

Lucchi, N. (2023). CHATGPT: A case study on copyright challenges for Generative Artificial Intelligence Systems. European Journal of Risk Regulation, 1–23. https://doi.org/10.1017/err.2023.59

Mangan, D. (2024, January 5). Microsoft, OpenAI sued for copyright infringement by nonfiction book authors in class action claim. CNBC. https://www.cnbc.com/2024/01/05/microsoft-openai-sued-over-copyright-infringement-by-authors.html

Metz, R. (2022a, September 3). AI won an art contest, and artists are furious | CNN business. CNN. https://edition.cnn.com/2022/09/03/tech/ai-art-fair-winner-controversy/index.html

Metz, R. (2022b, October 21). These artists found out their work was used to train AI. now they’re furious | CNN business. CNN. https://edition.cnn.com/2022/10/21/tech/artists-ai-images/index.html

Pasquale, F. (2016). The Black Box Society: The secret algorithms that control money and information. Harvard University Press. https://doi.org/10.4159/harvard.9780674736061.c8

Peters, J., & Davis, W. (2023, August 21). The New York Times blocks OpenAI’s web Crawler. The Verge. https://www.theverge.com/2023/8/21/23840705/new-york-times-openai-web-crawler-ai-gpt

Roth, E. (2023, December 27). The New York Times is suing OpenAI and Microsoft for copyright infringement. The Verge. https://www.theverge.com/2023/12/27/24016212/new-york-times-openai-microsoft-lawsuit-copyright-infringement

Sag, M. (2023). Copyright safety for generative AI. SSRN Electronic Journal. https://doi.org/10.2139/ssrn.4438593

Shen, X. (2024, January 15). Why a Chinese court’s landmark decision recognising the copyright for an AI-generated image benefits creators in this nascent field. South China Morning Post. https://www.scmp.com/tech/tech-trends/article/3248510/why-chinese-courts-landmark-decision-recognising-copyright-ai-generated-image-benefits-creators

Taplin, J. (2017). Move Fast and Break Things. New York: Little, Brown. https://doi.org/10.1145/3244026

UK Government. (2022, June 28). Artificial intelligence and intellectual property: Copyright and patents. https://www.gov.uk/government/consultations/artificial-intelligence-and-ip-copyright-and-patents/artificial-intelligence-and-intellectual-property-copyright-and-patents

Vaus, J. (2022, August 9). There’s a Digital Tear in my virtual beer: Writing a country song with AI. Built In. https://builtin.com/media-gaming/ai-songwriting

Vincent, J. (2022, September 21). Getty Images bans AI-generated content over fears of legal challenges. The Verge. https://www.theverge.com/2022/9/21/23364696/getty-images-ai-ban-generated-artwork-illustration-copyright

Wiggers, K. (2022, October 17). Stability AI, the startup behind Stable Diffusion, raises $101M. TechCrunch. https://techcrunch.com/2022/10/17/stability-ai-the-startup-behind-stable-diffusion-raises-101m/

Xiang, C. (2022, December 14). Artists are revolting against AI art on artstation. Vice. https://www.vice.com/en/article/ake9me/artists-are-revolt-against-ai-art-on-artstation

Zhao, H. (2023, March 10). Creatives in China are pissed about Lofter’s A.I. art generator. Radii. https://radii.co/article/lofter-under-fire-as-creators-protest-ai-art-generators
