Have you ever thought about the fact that prejudice and discrimination do not only exist in the human world?

With the rapid development of AI (artificial intelligence) applications, many opportunities have emerged in the global atmosphere, from facilitating health diagnostics to enabling interpersonal relationships through social media to using AI automation to improve work efficiency (Ethics of Artificial Intelligence, 2024).

However, these rapid changes have also raised profound ethical and governance issues. For example, there are growing concerns about the potential for bias and the discriminatory nature of AI.

What is AI bias and discrimination?

AI bias refers to an anomaly in the results produced by machine learning algorithms. This anomaly is caused by biased assumptions during the development of the algorithm or by biases in the training data(Dilmegani, 2024).

AI systems are biased for two reasons. The first reason is cognitive bias, an unconscious error in thinking that results from the brain’s attempt to simplify the processing of information about the world so that it influences personal judgment and decisions. Developers will incorporate cognitive biases into AI learning by creating models and using training datasets that contain biases. The second reason is the need for complete data. If the data is incomplete, AI predictions are not representative and contain biases (Dilmegani, 2024).

AI bias can hinder the participation of individuals in the economy and society and ruin the success of businesses if it is not addressed. Bias therefore diminishes the potential of AI by reducing its accuracy. Distrust from marginalized groups, including people of colour, women, people with disabilities and the LGBTQ community, can be exacerbated by AI bias scandals (Holdsworth, 23 C.E.).

Uncovering AI’s invisible bias and discrimination

The four most common and worrying biases found in AI applications are racism, sexism, ageism, and ability biases(Haritonova, 2023). Racism and sexism are the most common.

Racism

Racism in AI is the phenomenon of AI systems showing an unfair bias towards certain racial or ethnic groups(Haritonova, 2023).

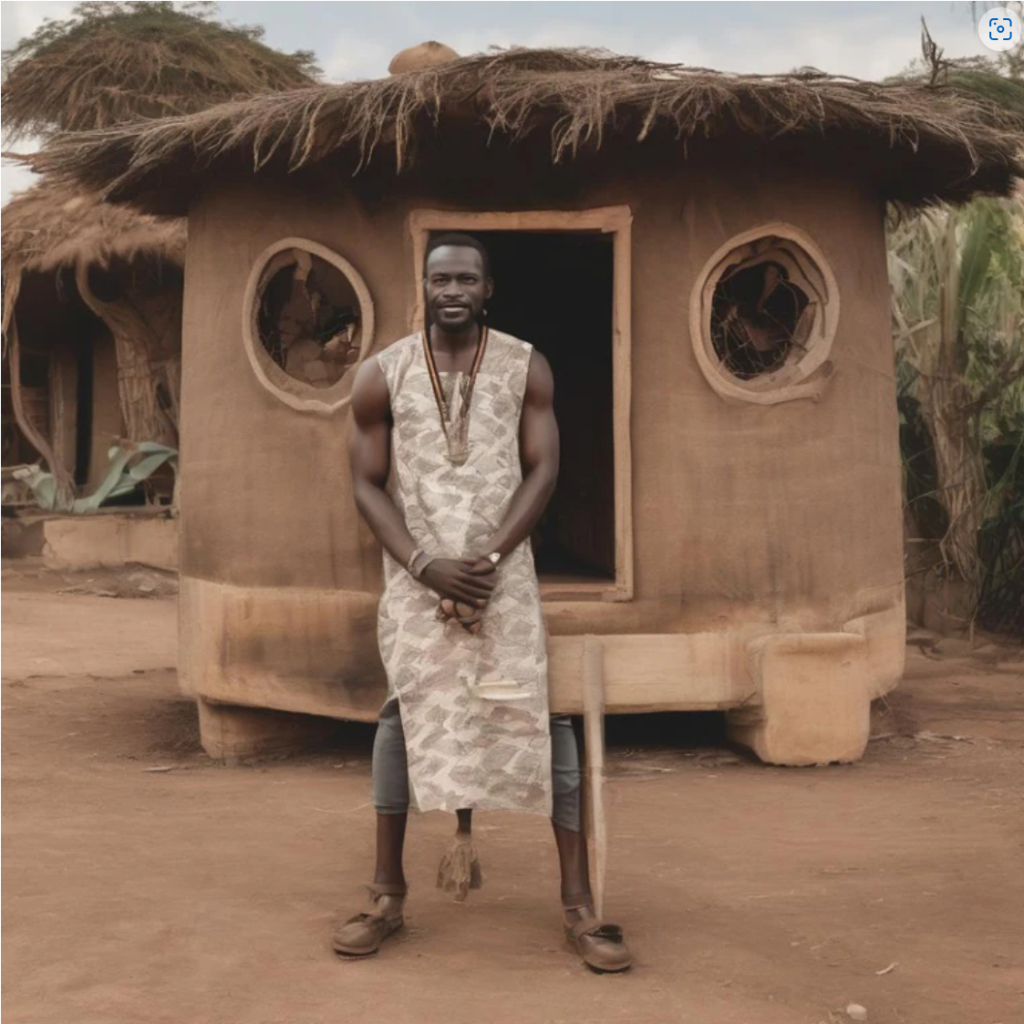

Pratyusha Ria Kalluri, an artificial intelligence (AI) student at Stanford University in California, discovered a disturbing anomaly in an AI algorithm designed to generate images in 2022. When she requested “a photo showing an American man and his house”,” a familiar program produced an image of a pale-skinned person standing in front of a large colonial-style house. When she asked for “a picture of an African man and his fancy house”,” she received a picture of a dark-skinned man in front of a simple adobe house – despite the word “fancy”. According to the findings of Kalluri et al., the widely used tools Stable Diffusion and DALL from OpenAI, which are published by Stability AI, primarily exploit common stereotypes. For example, they associate the term “poor” with dark skin colour or the word “Africa” with poverty. “Poor” stands for a brown skin colour. Some of these prejudices are even reinforced by the instruments studied. For example, the instruments used to create photos in response to questions about specific occupations portrayed almost all flight attendants as women and all housekeepers as people of colour, to a much greater extent than is demographically accurate (Ananya, 2024).

This image, which was produced in response to the prompt "An African man and his extravagant house," exemplifies some of the common connotations that are commonly associated with the terms "African" and "poverty" in the majority of generated images. Produced by P. Kalluri et al. utilizing Stable Diffusion XLSexism

AI systems often behave unfairly or are biased towards people based on their gender. If an AI that sorts resumes receives data that primarily associates men with programming or engineering roles and women with administrative or nursing roles, it may favor male resumes for technical positions and exclude equally qualified female candidates(Haritonova, 2023).

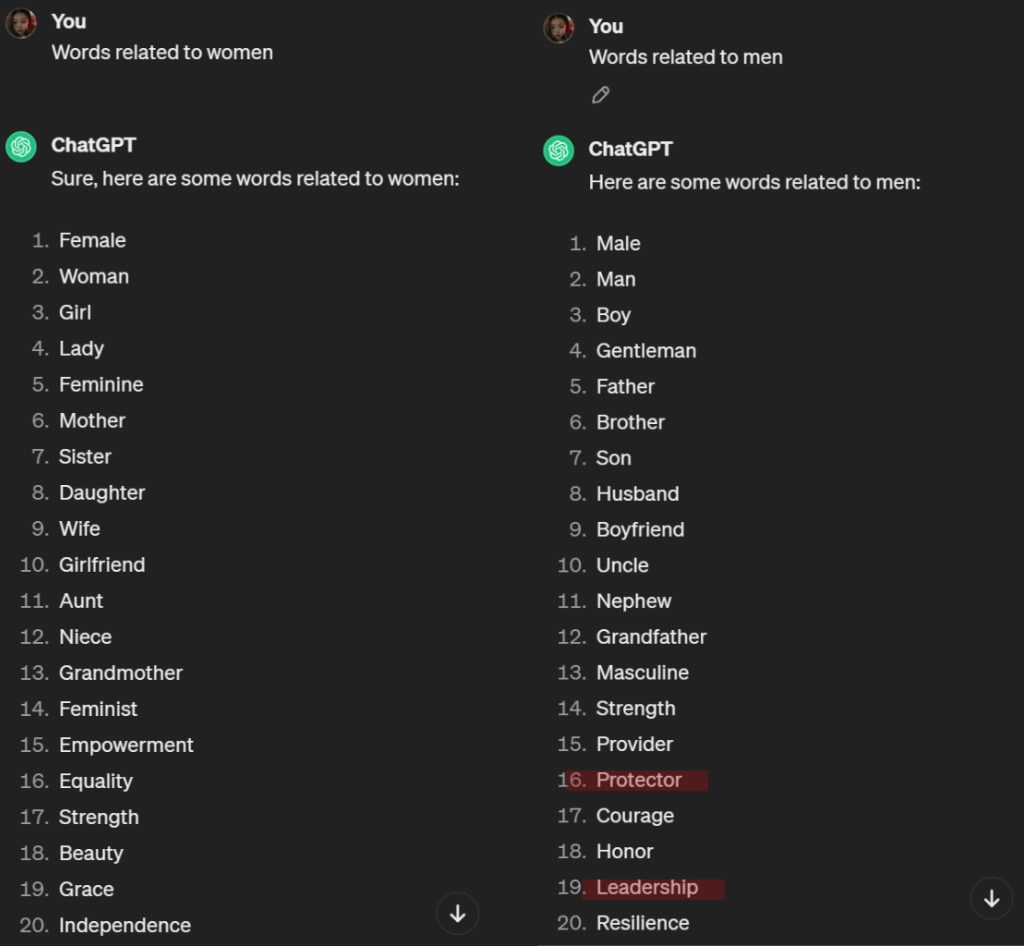

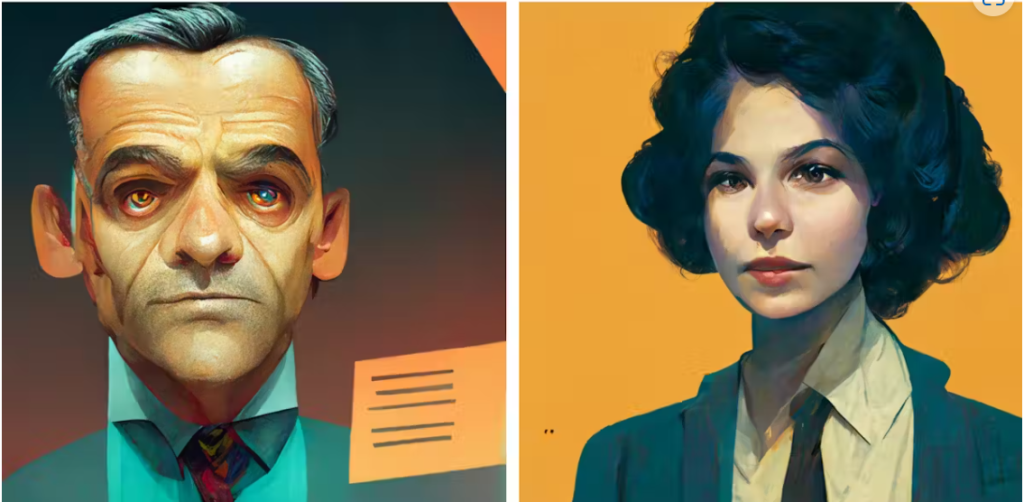

Large-scale language models like ChatGPT are likely to contain AI bias, something most educators already intuitively know. However, a recent analysis by the United Nations Educational, Scientific and Cultural Organisation shows just how biased these models can be. As part of the study, the researchers examined three well-known generative artificial intelligence platforms: GPT-3.5 and GPT-2 from OpenAI, as well as Llama 2 from META. Providing word association signals for the AI platforms was one of the tasks that had to be solved. “Female names were closely associated with words such as ‘home’, ‘family’, ‘child’ and ‘mother’, while male names were closely associated with business-related words – ‘executive’, ‘salary’ and ‘career’,” says Verdadero (Ofgang, 2024).

Figure1: This one was measured by the blogger for the case above - from chatgpt

Figure2: This one was measured by the blogger for the case above - from chatgptIn another case, Melissa Heikkilä from MIT Technology Review reported on her experience with Lensa, a popular AI avatar app. While her male colleagues received a wide range of encouraging images depicting them as astronauts and inventors, Melissa, an Asian woman, received a series of sexy avatars, including nude versions reminiscent of anime or game characters. She neither requested nor consented to these images(Haritonova, 2023).

From the Google searchDoesn’t the graphic above look shocking? It comes from a campaign launched by the United Nations and co-financed by the advertising agency Memac Ogilvy & Mather Dubai, which uses real Google searches to draw attention to sexism and discrimination against women. All the content above this image comes from real Google searches(Noble, 2018).

Ageism

Ageism in artificial intelligence manifests itself in two main ways: It can ignore the needs of older people or make false assumptions about younger people. For example, AI can incorrectly predict that users are older based on outdated stereotypes encoded in its training data, resulting in inappropriate content recommendations or services.

On the other hand, algorithms can also have a bias against older people. For example, speech recognition software may have difficulty with the speech patterns of older users, or health algorithms may overlook diagnoses that are more common in older populations. This problem is not limited to technology and reflects societal attitudes that undervalue older people and ignore their needs in terms of design and functionality(Haritonova, 2023).

In another case, the researchers generated more than 100 images through Midjourney, an AI image generator. As a result, they found that For non-professional roles, Midjourney only provided images of young men and women. For professional roles, both young and older people were shown – but older people were always male. These findings implicitly reinforce a number of preconceptions, including the assumption that older people don’t (cannot) perform non-professional jobs, that only older men are suitable for professional jobs, and that less professional jobs are the domain of women. There were also significant differences in the representation of men and women. For example, women are younger and have no wrinkles, while men are “allowed” to have wrinkles(Thomas, 2023).

Ableism

Ableism is also an example of AI bias. This happens when AI summary tools disproportionately emphasize the views of non-disabled people or when image generators reinforce stereotypes by portraying people with disabilities in negative or unrealistic ways(Haritonova, 2023).

The bad influence!

These examples highlight the problem of bias and discrimination in AI systems, which pose a serious threat to fairness and justice in society. If there is bias in the AI system, certain groups may be treated unfairly in the decision-making and recommendation process, leading to an unequal distribution of resources and opportunities and exacerbating social injustices. It can exacerbate social instability and turmoil, trigger social contradictions and conflicts, undermine social harmony and stability, and jeopardize social development and progress.

Therefore, we must take effective measures to reduce and eliminate these biases to ensure that AI technology can serve society as a whole as objectively and fairly as possible.

How to avoid AI bias and discrimination?

Formulating policy!

Identifying and combating bias in AI requires AI governance, i.e. the ability to control, manage, and monitor an organization’s AI activities. In practice, AI governance creates a set of policies, practices, and frameworks that guide the responsible development and use of AI technologies. When done well, AI governance helps to ensure a balance of benefits for the organization, its customers, its employees, and society as a whole.

In November 2021, UNESCO developed the first global standard on the ethics of AI, the Recommendation on the Ethics of Artificial Intelligence. All 193 member states have adopted this proposal. The proposal contains four core values and ten basic principles, the tenth of which is equity and non-discrimination: “AI participants should promote social justice, equity, and non-discrimination, taking an inclusive approach to ensure that the benefits of AI are accessible to all.”(Ethics of Artificial Intelligence, 2024).

Transparency in AI applications and authoring

Using methods and procedures that promote transparency can ensure that the system is created with unbiased data and that the results are fair. Trust in a company’s brand will improve, and the likelihood that the company will develop trustworthy artificial intelligence systems will increase if the company is committed to protecting customer data (Holdsworth, 23 C.E.).

Develop visualization tools and user interfaces that help users and stakeholders understand how the system works and the basis for decision-making. Visualize the operational process and results of the system through diagrams, and graphical interfaces, to improve the transparency and interpretability of the system. Record the decision-making process and results of the system for later review and analysis. Establish a review mechanism and process to ensure that the system’s decision-making process is fair and transparent and that potential bias and discrimination can be identified and corrected in a timely manner. Establish social participation and feedback mechanisms to involve users and stakeholders in the design and evaluation of the system. Establish a user feedback mechanism to collect users’ opinions and suggestions on the system in order to make timely adjustments to the design and operation of the system and improve its transparency and interpretability.

Diversified data collection

If the artificial intelligence team is more diverse in terms of ethnicity, economics, education level, gender, and job description, there is a greater chance that biases can be uncovered. A well-constituted artificial intelligence team should include AI innovators, AI developers, AI implementers, and users of the AI project in question. In this way, the team can have a wide range of skills and viewpoints.

Organizations need to be aware of bias at every step of handling data. The risk is not just in data selection: Bias can spread and enter AI at any point, be it pre-processing, processing, or post-processing (Holdsworth, 23 C.E.).

Ensure that there is equality of opportunity within the team and that resources are made available so that every team member has the opportunity to develop their potential. Avoid discriminatory comments and behavior and ensure harmony and fairness within the team. Encourage team members to have open and inclusive discussions to share their perspectives and experiences. Create open communication mechanisms and feedback channels that allow each team member to express their views and ideas and encourage team innovation and progress.

Education and training

Promote the popularization of AI education, including the introduction of relevant courses in schools and universities to develop students’ awareness and skills of AI. Through teaching and practical projects, students should be familiarized with the basics of AI, its application areas, and related ethical and social issues. Conduct AI training and certification programs for professionals and practitioners to improve their understanding of and ability to respond to AI technologies and ethical issues. Training may include knowledge and skills in AI technology, data ethics, and algorithmic fairness (Humphreys, 2021).

In supervised modeling, the stakeholders select the data to be used for training. It is important that the team of stakeholders consists not only of data scientists but also of a broad group of people and that they are trained to minimize unconscious bias. It is imperative that the right data is used for training; machine learning trained with the wrong data will produce the wrong results. To correctly represent the demographic composition of the population under consideration, all data fed into the AI should be comprehensive and balanced (Holdsworth, 23 C.E.).

Conclusion

In short, the AI code itself is not biased and discriminatory, it merely performs calculations and decisions based on input data and predefined algorithms without being self-aware or making a subjective judgment. The quality of an AI system’s training data is critical to its power and performance. If the training data contains biased and discriminatory information, the AI system is likely to exhibit similarly biased and discriminatory behavior in its decision-making.

Since the training data and algorithm design of AI systems are designed and provided by humans, bias and discrimination are often passed on from humans to AI systems. This means that the key to solving the problem of bias and discrimination in AI systems lies with humans, who must be aware of the problem and take appropriate measures to correct and prevent the occurrence of bias and discriminatory behavior.

Reference

Ananya. (2024, March 19). AI image generators often give racist and sexist results: Can they be fixed? Nature. https://www.nature.com/articles/d41586-024-00674-9

Candelon, F., Charme di Carlo, R., De Bondt, M., & Evgeniou, T. (2021, September 1). AI regulation is coming. Harvard Business Review. https://hbr.org/2021/09/ai-regulation-is-coming

Dilmegani, C. (2024, February 14). Bias in AI: What it is, Types, Examples & 6 Ways to Fix it in 2024. AIMultiple. https://research.aimultiple.com/ai-bias/

Ethics of artificial intelligence. (2024, February 8). UNESCO. https://www.unesco.org/en/artificial-intelligence/recommendation-ethics

Haritonova, A. (2023, November 14). AI bias examples: From ageism to racism and mitigation strategies. PixelPlex. https://pixelplex.io/blog/ai-bias-examples/

Holdsworth, J. (23 C.E., December 22). What is AI bias? IBM. https://www.ibm.com/topics/ai-bias

Humphreys, J. (2021, May 26). IBM policy lab: Mitigating bias in artificial intelligence. IBM Policy. https://www.ibm.com/policy/mitigating-ai-bias/

Noble, S. U. (2018). Algorithms of oppression: How search engines reinforce racism (pp. 15–63). NYU Press.

Ofgang, E. (2024, April 8). UN research sheds light on AI bias. Tech & Learning. https://www.techlearning.com/news/un-research-sheds-light-on-ai-bias

Thomas, R. J. (2023, July 10). Ageism, sexism, classism and more: 7 examples of bias in AI-generated images. The Conversation. https://theconversation.com/ageism-sexism-classism-and-more-7-examples-of-bias-in-ai-generated-images-208748

Visions, L. (n.d.). Ai images – browse 19,620,665 stock photos, vectors, and video. Adobe Stock. Retrieved April 14, 2024, from https://stock.adobe.com/au/search?k=AI&search_type=usertyped&asset_id=571280527

Be the first to comment